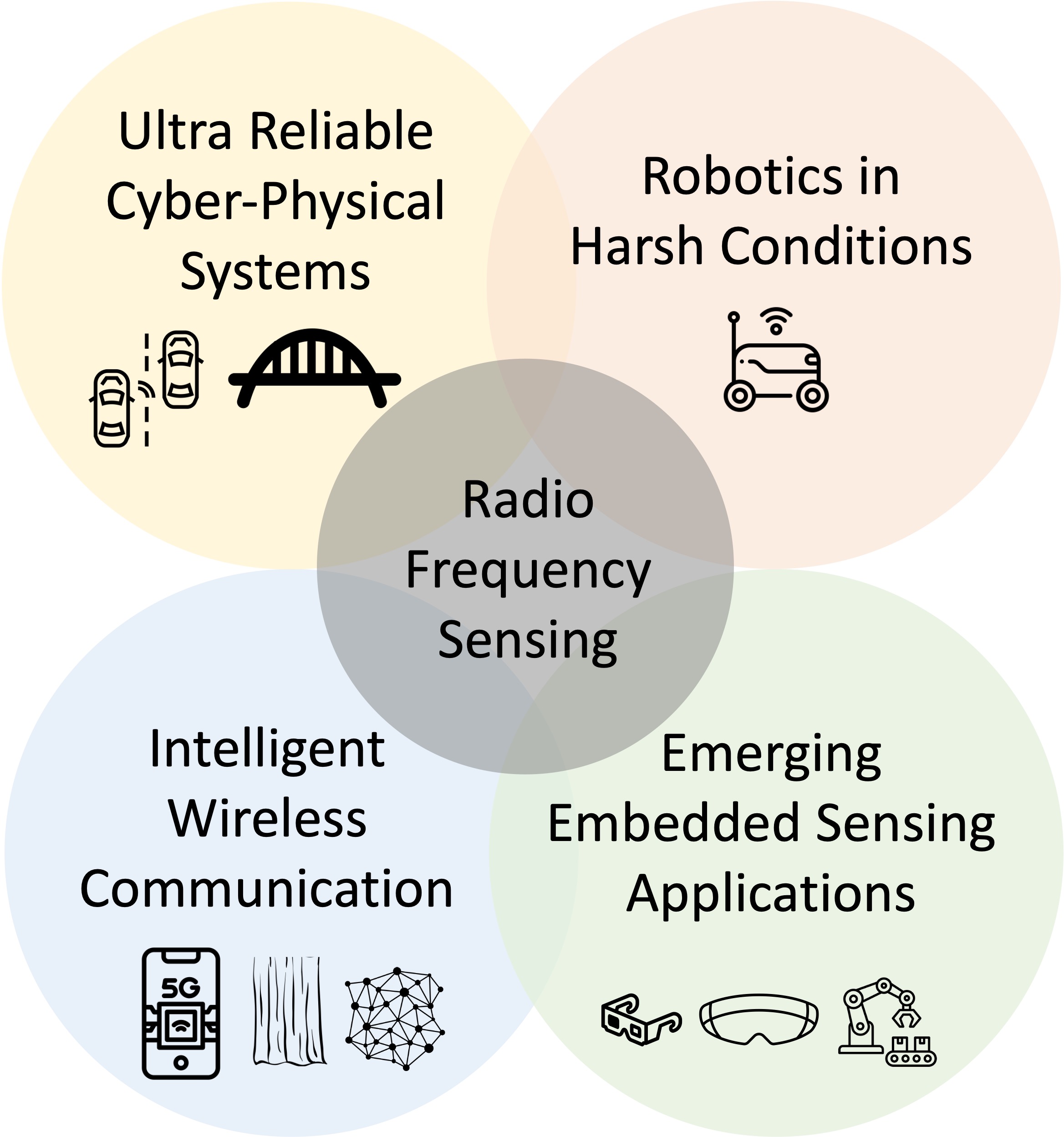

My research builds fundamental principles and practical systems that define the next-generation wireless perception and communication landscape. It takes a full-stack approach: building novel embedded hardware, machine learning and signal processing techniques, and compute accelerators, and architecting end-to-end systems that manage constraints on communication, real-time latency, and reliability. I am interested in pushing the limits of wireless to uncover new capabilities in robotics, automotive, and health. For critical use cases, I employ cyber-physical systems principles to create stable interaction loops between wireless-powered machines and the world.

Learning-based RF perception

RadarSim

Neural radiance fields that rely only on radar scans are limited by the effective spatial resolution of those scans. Neural radiance fields trained on camera images, by contrast, can build a good 3D understanding of the world. Can we leverage cameras to improve radar novel view synthesis? RadarSim presents multimodal neural radiance fields that combine camera and radar. It achieves better geometric understanding and, consequently, better radar novel view synthesis.
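A standard camera NeRF renders each pixel by alpha-compositing density and color samples along a ray: each sample contributes T_i · (1 − exp(−σ_i δ_i)) · c_i, where T_i is the transmittance up to that sample. The minimal NumPy sketch below shows only that generic NeRF quadrature; `composite_along_ray` is a hypothetical helper, and this is not RadarSim's radar rendering model.

```python
import numpy as np

def composite_along_ray(sigma, values, deltas):
    """Generic NeRF quadrature: sum_i T_i * (1 - exp(-sigma_i * delta_i)) * v_i.

    sigma:  (N,) densities at the ray samples
    values: (N,) or (N, C) per-sample values (e.g. RGB)
    deltas: (N,) distances between consecutive samples
    """
    sigma = np.asarray(sigma, dtype=float)
    values = np.asarray(values, dtype=float)
    deltas = np.asarray(deltas, dtype=float)
    # Opacity of each ray segment.
    alpha = 1.0 - np.exp(-sigma * deltas)
    # Transmittance T_i = exp(-sum_{j<i} sigma_j * delta_j): chance the ray reaches sample i.
    optical_depth = np.concatenate(([0.0], np.cumsum(sigma * deltas)[:-1]))
    trans = np.exp(-optical_depth)
    weights = trans * alpha
    # Weighted sum of per-sample values; works for scalar or multi-channel values.
    return weights @ values, weights
```

With a nearly opaque middle sample, almost all weight lands on that sample, so the rendered value is close to its value; a fully transparent ray renders to zero.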

Full Paper

GRT

GPT for radars? GRT! mmWave radars have great potential for robotic perception. Learning approaches (such as RadarHD) that address the fundamental limits of mmWave radars have shown early promise for high-fidelity perception. However, such efforts have largely relied on small, task-specific datasets with models trained from scratch. This paper takes learning on radar data to a new level. We present the largest (publicly available) raw radar dataset, an open-source data collection toolchain, foundation-model-style training that scales to several perception tasks, and a scaling law that estimates how much more data is needed to fully exploit the power of a foundation model.
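Scaling laws are often summarized as a power law in dataset size. Assuming the simple form loss ≈ a · D^(−b) (GRT's fitted law may well use a different form, for example one with an irreducible-loss term), the sketch below fits a and b by linear regression in log-log space and then inverts the fit to estimate the data needed for a target loss. `fit_power_law` and `data_needed` are hypothetical helpers.

```python
import numpy as np

def fit_power_law(dataset_sizes, losses):
    """Fit loss ~= a * D**(-b) by least squares on log(loss) vs log(D); returns (a, b)."""
    slope, intercept = np.polyfit(np.log(dataset_sizes), np.log(losses), deg=1)
    return float(np.exp(intercept)), float(-slope)

def data_needed(a, b, target_loss):
    """Invert the fitted law: the dataset size D at which a * D**(-b) == target_loss."""
    return (a / target_loss) ** (1.0 / b)
```

In practice the inputs would be measured validation losses at several training-set sizes; extrapolating far beyond the fitted range is the main source of error in such estimates.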

Full Paper, Website, Video

ICCV Oral

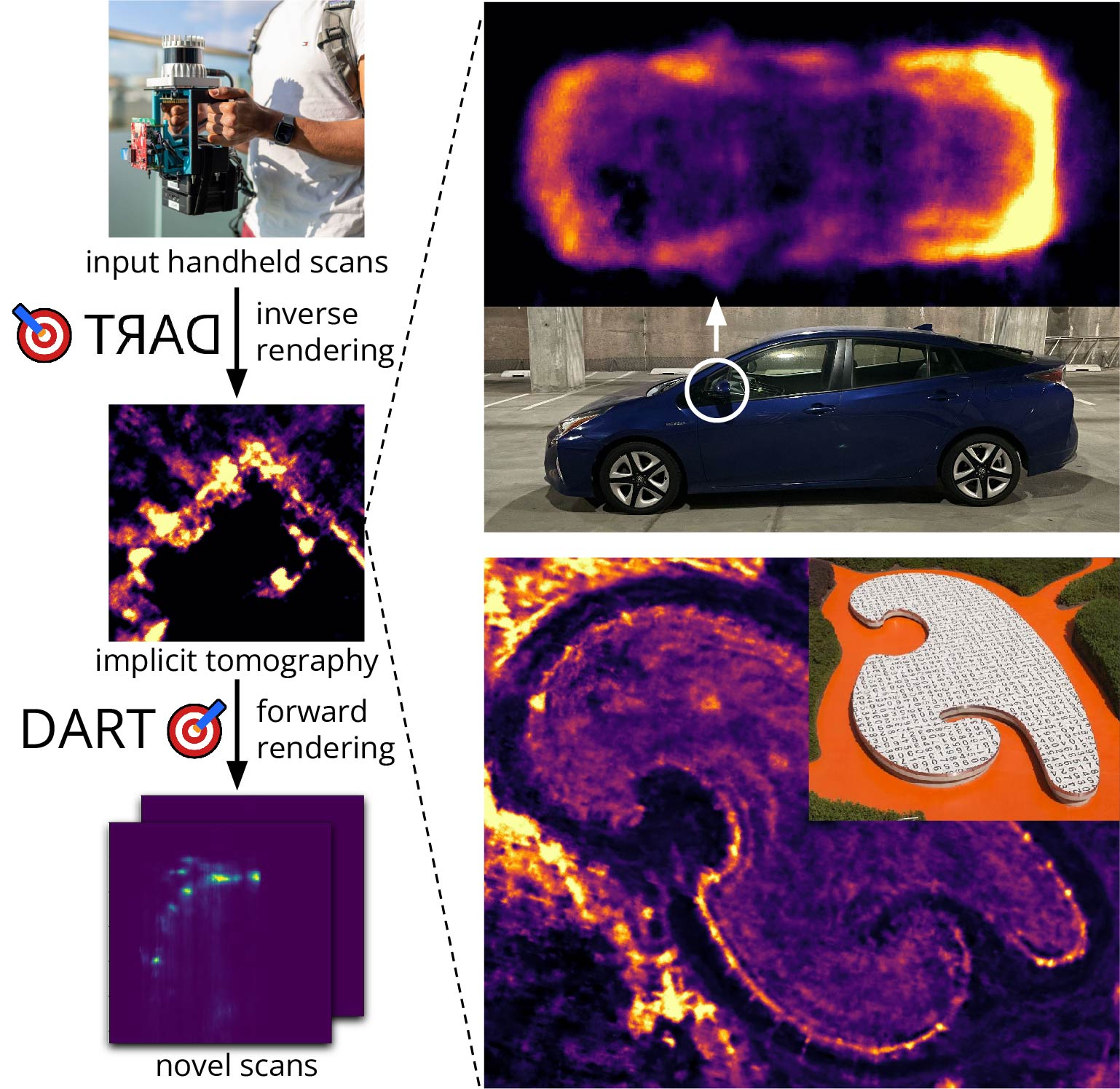

DART

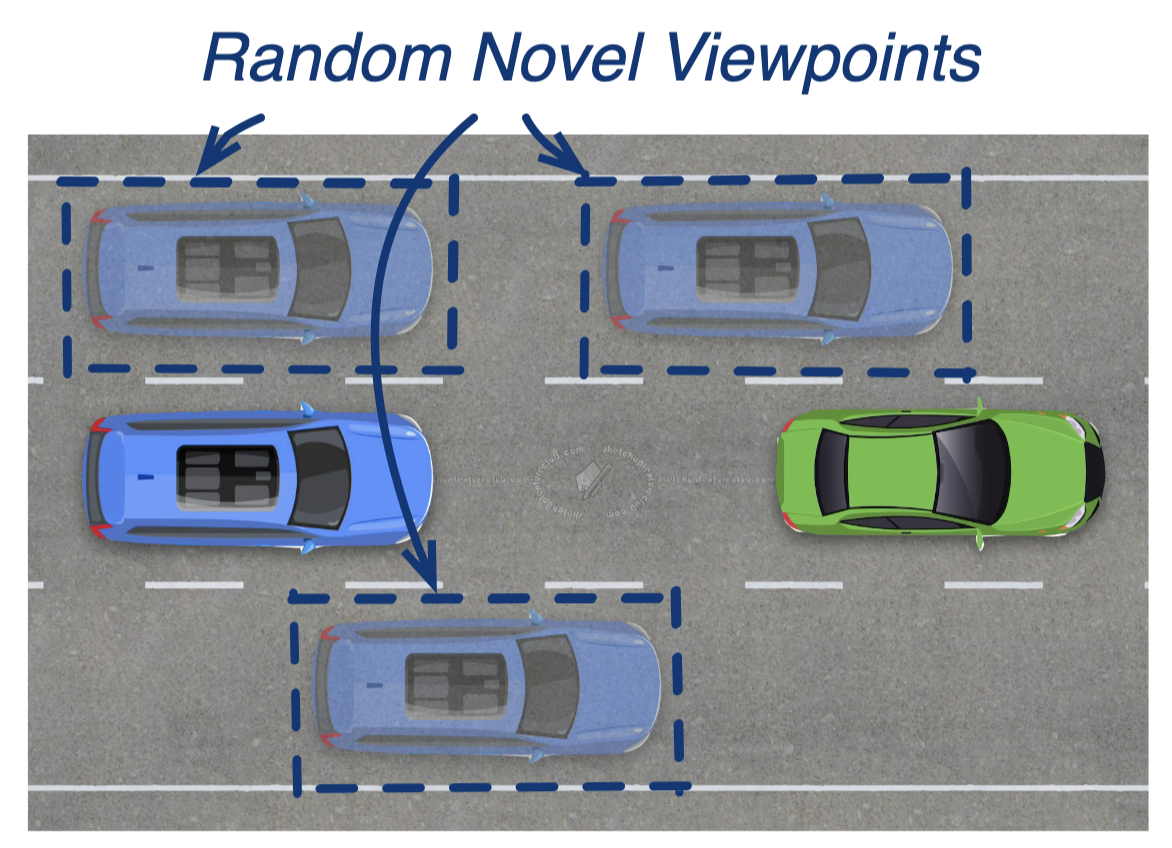

Realistic radar simulation requires careful 3D modeling of a virtual world with accurate material properties, plus modeling of all types of EM wave interactions with it. Prior work has relied on explicit approaches: direct modeling, or radar imaging followed by simulated view rendering. These explicit approaches are hard to design for practical, crowded scenes with heterogeneous material composition and challenging multipath interactions. We propose an implicit neural rendering approach that accurately simulates radar measurements from novel viewpoints. As a by-product, our approach also produces high-quality radar images of scenes, akin to synthetic aperture imaging, but without any explicit modeling.

Full Paper,

Code,

Dataset,

Video

CVPR Oral

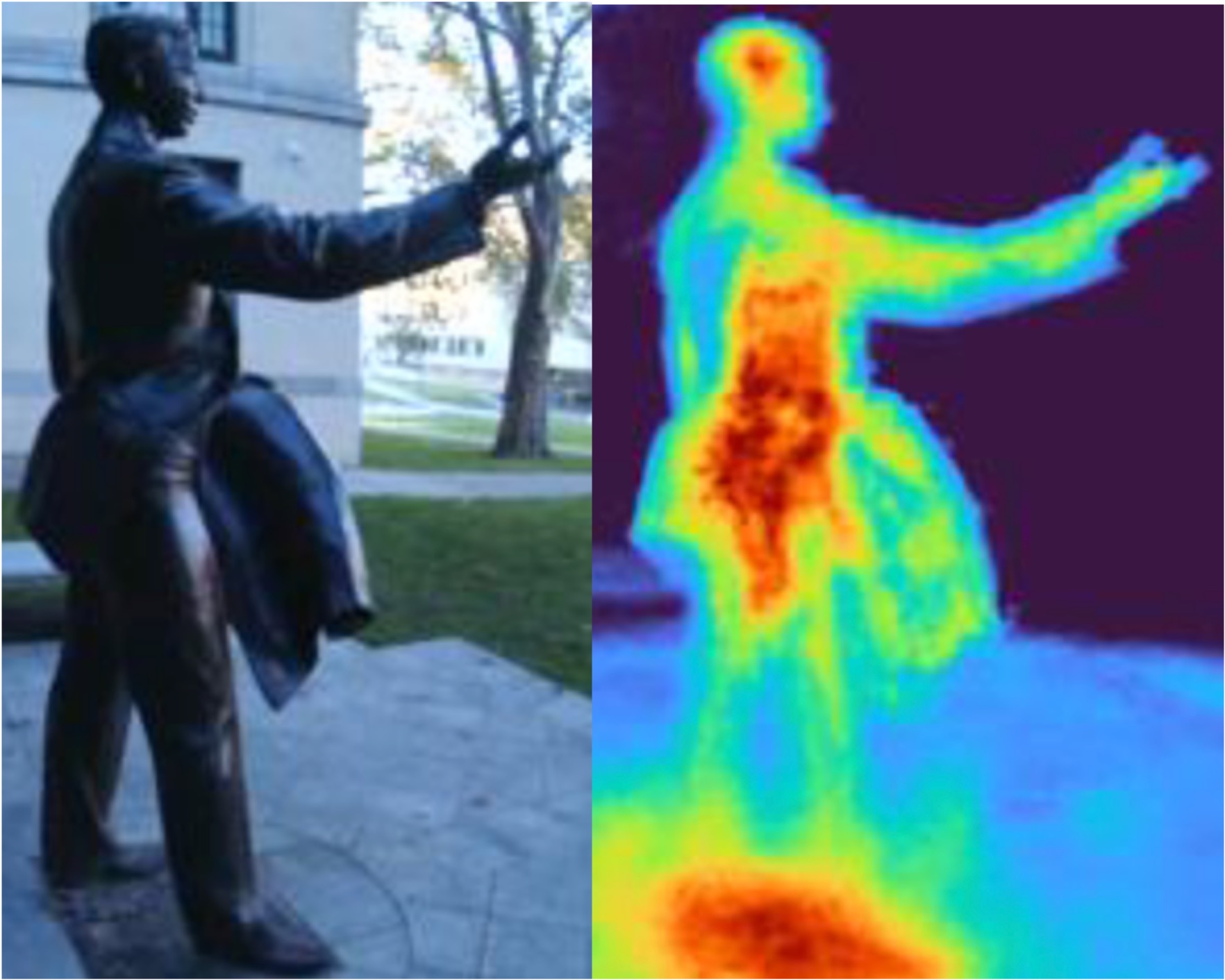

RadarHD

How can we enable high-quality perception for robots navigating harsh environments with smoke or fog? Camera- or lidar-based perception suffers in these conditions. We explore millimeter wave radar-based perception to see past these occlusions. We combat the poor spatial resolution of these radars by training an end-to-end deep learning super-resolution network that outputs lidar-like point clouds from just a cheap, single-chip radar! We also demonstrate RadarHD's robustness in smoke by testing with smoke bombs in a smoke chamber.
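Some standard radar arithmetic helps show why a single-chip radar looks blurry next to lidar. FMCW range resolution is c/(2B), while the angular resolution of a uniform array of N (virtual) antennas at λ/2 spacing is roughly 2/N radians at boresight. The numbers below assume a 4 GHz chirp bandwidth and 3 Tx × 4 Rx = 12 virtual antennas, which is typical of 77 to 81 GHz single-chip parts but is an assumption, not necessarily RadarHD's configuration.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def range_resolution(bandwidth_hz):
    """FMCW range resolution: c / (2B)."""
    return C / (2.0 * bandwidth_hz)

def angular_resolution_rad(n_virtual, spacing_wavelengths=0.5, theta_rad=0.0):
    """Approximate uniform-linear-array resolution: lambda / (N * d * cos(theta)), d in wavelengths."""
    return 1.0 / (n_virtual * spacing_wavelengths * math.cos(theta_rad))

if __name__ == "__main__":
    dr = range_resolution(4e9)           # assumed 4 GHz chirp bandwidth
    dtheta = angular_resolution_rad(12)  # assumed 12 virtual antennas
    print(f"range resolution: {dr * 100:.2f} cm")
    print(f"angular resolution: {math.degrees(dtheta):.1f} deg")
    print(f"cross-range cell at 10 m: {10 * dtheta:.2f} m")
```

Range resolution comes out at a few centimeters, but the roughly 10° angular resolution spreads a point at 10 m over a cross-range cell of more than a meter; that angular blur is the gap a super-resolution network has to close.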

ICRA Paper,

Extended Paper,

Demo Link,

Slides,

Talk,

Poster,

Code and Dataset

Top-5 Demos (MobiCom 2023)

Physics-based RF imaging

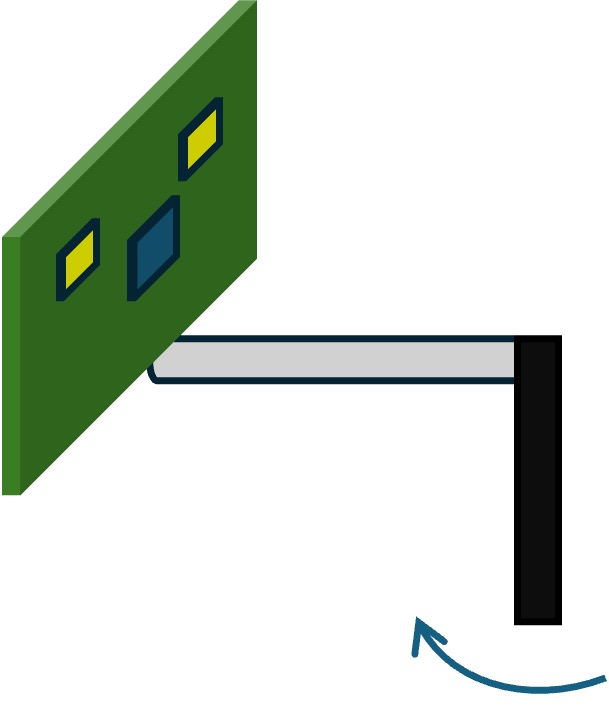

Umbra

How can we enable high-resolution RF imaging without large arrays or the antenna motion required by Synthetic Aperture Radar (SAR)? Umbra builds inverse pinhole imaging that enables a static, single antenna to achieve high-resolution, high-update-rate imaging. All we need to add to a single-antenna setup is a lightweight strip spun by a low-cost DC motor. We show that this emulates an "inverse pinhole" and provides extra information that can boost resolution. Static-mount applications such as pole- or wall-mounted radars and drones hovering in place can directly benefit from this approach.

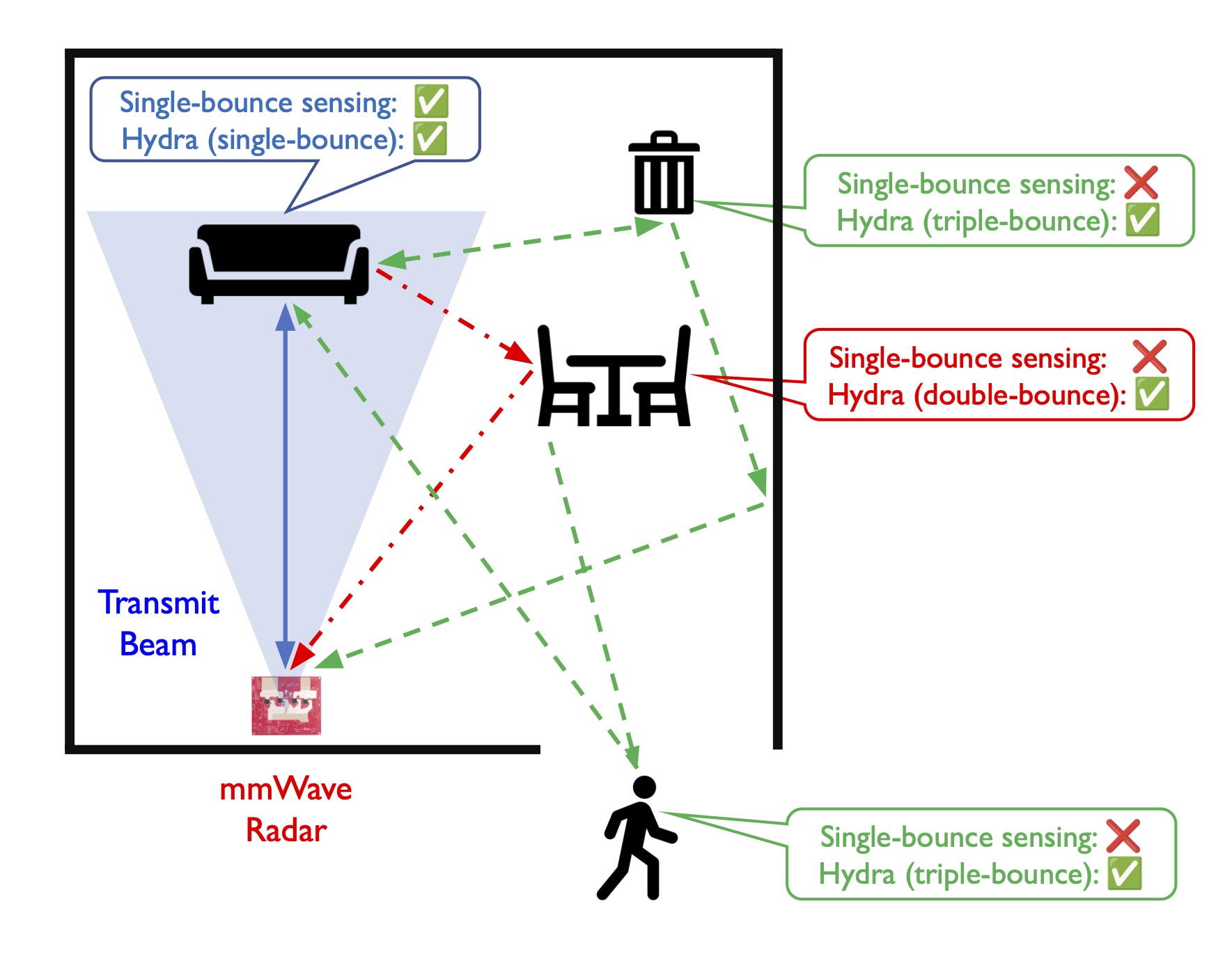

Hydra

Conventional radar processing only estimates the spatial locations of objects in the incident field of view. In practice, however, radio waves bounce off objects in the field of view and illuminate hidden objects. Can we leverage this multi-bounce effect to image scenes beyond the field of view of a typical mmWave radar? We propose algorithms that handle double and triple bounces, expanding the field of view even to regions diametrically opposite the incident beam!

Full Paper

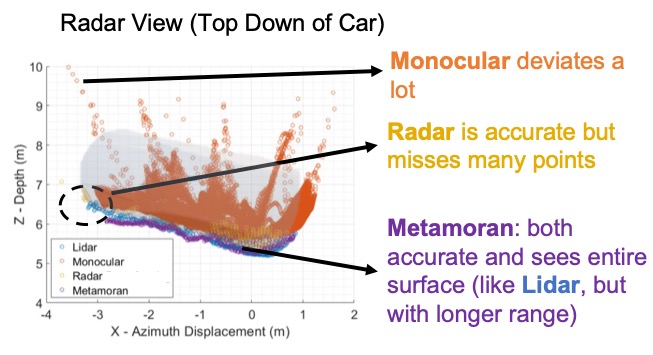

Metamoran

How can we enable long-range depth imaging from a single vantage point to build simple-to-deploy, high-quality surveillance systems? Metamoran proposes a monocular camera and radar fusion solution that leverages each sensor's strengths to offset the other's weaknesses, producing accurate depth images of various objects even at long ranges.

Full Paper,

Slides,

Talk

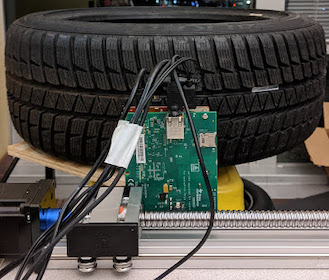

Osprey

Tire wear affects the safety and performance of automobiles. Past solutions are either inaccurate, require sensors embedded in the tires, or are vulnerable to road debris. Osprey proposes a radio frequency tire wear sensing system, based on automotive millimeter wave radars, that overcomes the challenges posed by road debris. We design super-resolution algorithms to measure millimeter-level changes due to wear and tear. The same principles also enable detecting and localizing harmful metallic foreign objects in the tire.
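As background on why millimeter-level sensing is within reach of automotive radar: a radial displacement d changes the round-trip phase by 4πd/λ, and at 77 GHz λ is only about 3.9 mm. The sketch below shows just this textbook phase-displacement relation, assuming a 77 GHz carrier; it is not Osprey's super-resolution algorithm, and the helper names are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def wavelength(freq_hz):
    return C / freq_hz

def displacement_from_phase(delta_phase_rad, freq_hz):
    """Round-trip phase change -> radial displacement: d = lambda * dphi / (4 * pi)."""
    return wavelength(freq_hz) * delta_phase_rad / (4.0 * math.pi)

def phase_from_displacement(displacement_m, freq_hz):
    """Radial displacement -> round-trip phase change: dphi = 4 * pi * d / lambda."""
    return 4.0 * math.pi * displacement_m / wavelength(freq_hz)

if __name__ == "__main__":
    f = 77e9  # assumed automotive radar carrier
    print(f"1 mm of wear -> {math.degrees(phase_from_displacement(1e-3, f)):.0f} deg of phase")
    print(f"1 deg of phase -> {displacement_from_phase(math.radians(1), f) * 1e6:.1f} um")
```

One caveat this makes visible: phase wraps every 2π, so phase alone is unambiguous only within λ/2 (about 1.95 mm at 77 GHz), and larger changes must be tracked or resolved by other means.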

Full Paper,

Demo Paper,

Magazine,

Talk,

Slides,

Video-1min,

Video-3min

Press: CMU,

Gizmodo,

Hackster.io,

That's Cool News Podcast,

Weibold,

Interesting Engineering,

Wonderful Engineering,

Tyrepress.com

Best Paper Honorable Mention (MobiSys 2020)

Best Demo (MobiSys 2020)

ACM GetMobile Research Highlight

Wireless + robotics

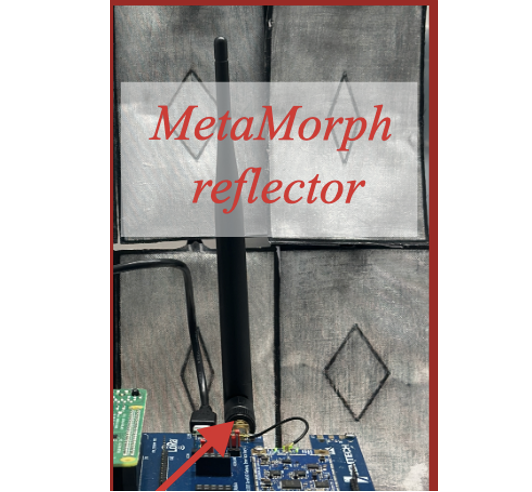

Metamorph

Can inflatable robots enhance wireless communication? We show that placing an array of shape-morphing inflatable robots near an antenna can boost the signal quality received at that antenna. Our methodology is best suited to base stations that need to adapt to slowly changing conditions, such as seasonal effects. For a Low-Power Wide-Area Network link, we show that deploying this at the gateway significantly improves signal quality and yields large battery savings.

Full Paper,

Code

Avatars

How can we maximize rendezvous opportunities between autonomous robots and sperm whales? We propose an autonomy and sensing module to this end. The sensing module on a drone performs VHF synthetic aperture radar-based whale positioning when a whale surfaces, and uses acoustic sensors to track whales underwater. Using the sensing module's data, we build a reinforcement learning algorithm that plans where autonomous robots should be dispatched to maximize the chances of whale rendezvous.

Full Paper

Wireless + health

PolyPulse

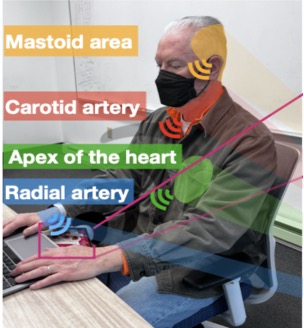

Radars have been used for non-contact heart rate monitoring. Can we push the boundaries by measuring heart-induced signals at multiple sites on the body? This would address physiological monitoring needs such as pulse transit time measurement and blood pressure monitoring, both of which need contact probes today. PolyPulse builds fine-grained beamforming to focus on multiple sites, and a deep learning pipeline to convert radar reflections into heart-induced signals at the respective sites. We observe strong correlation with contact-based pulse transit time and diastolic blood pressure.
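Pulse transit time is the delay between a pulse arriving at two body sites. Once heart-induced waveforms are available at a proximal and a distal site, one common generic way to estimate that delay is the peak of their cross-correlation. The sketch below assumes both waveforms share a sampling rate and time base; `estimate_delay_s` is a hypothetical helper, not part of PolyPulse's pipeline.

```python
import numpy as np

def estimate_delay_s(proximal, distal, fs_hz):
    """Estimate how far `distal` lags `proximal` (seconds) from the cross-correlation peak."""
    a = np.asarray(proximal, dtype=float)
    b = np.asarray(distal, dtype=float)
    # Remove DC offsets so the correlation peak reflects waveform shape, not baseline.
    a = a - a.mean()
    b = b - b.mean()
    # np.correlate(b, a, "full")[i] corresponds to lag k = i - (len(a) - 1),
    # and peaks at k = D when b[n] ~= a[n - D].
    xcorr = np.correlate(b, a, mode="full")
    lag = int(np.argmax(xcorr)) - (len(a) - 1)
    return lag / fs_hz
```

With periodic heartbeats the correlation has a peak every beat, so in practice the lag search would be restricted to a window well below one beat period.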

Full Paper,

Code

Backscatter

Platypus

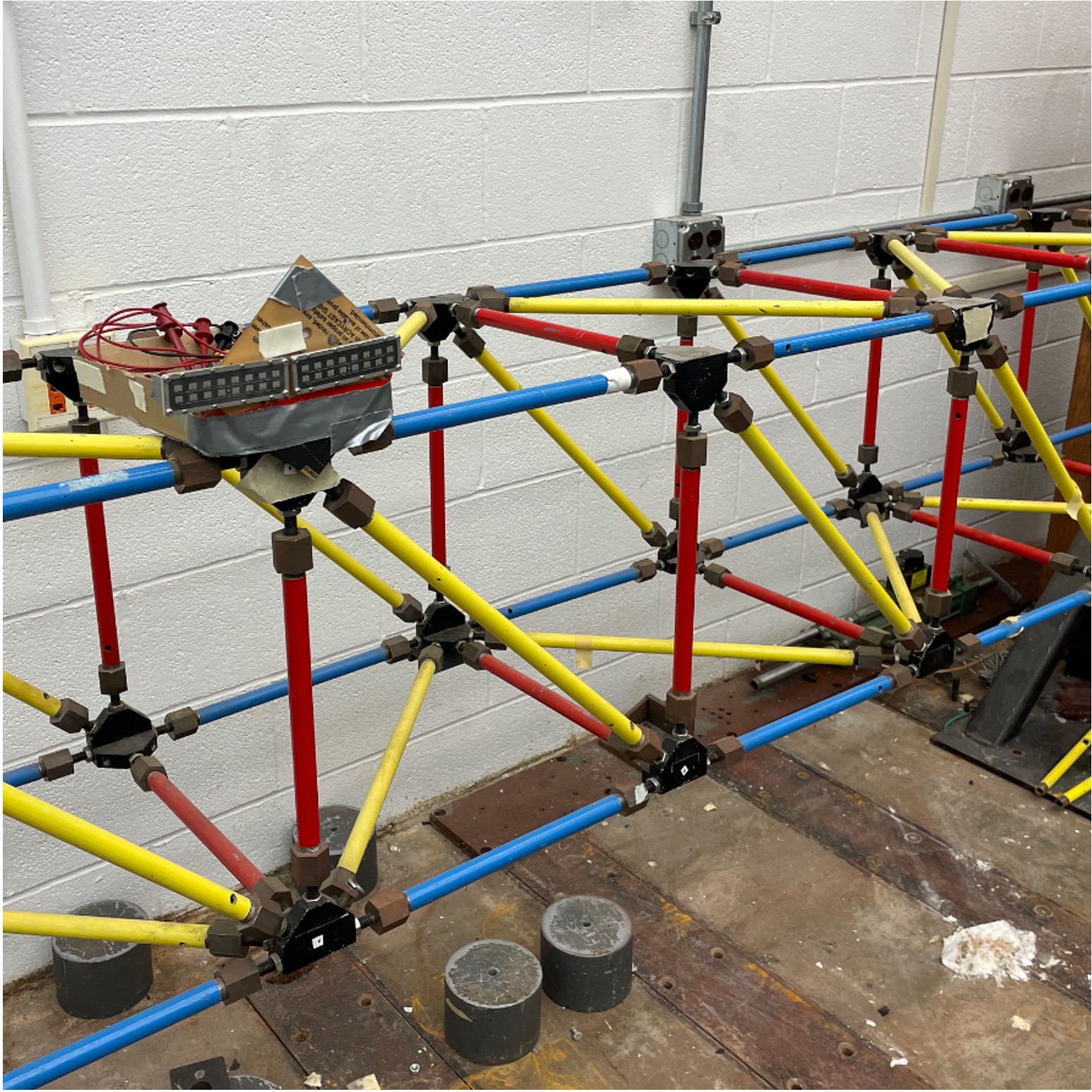

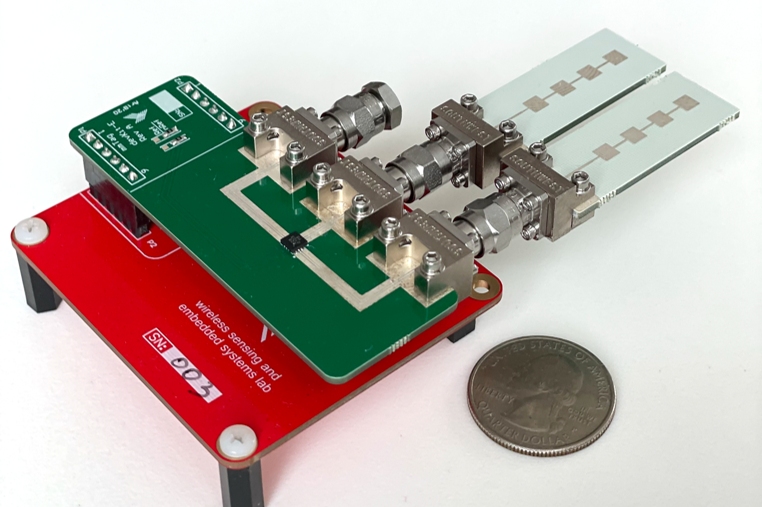

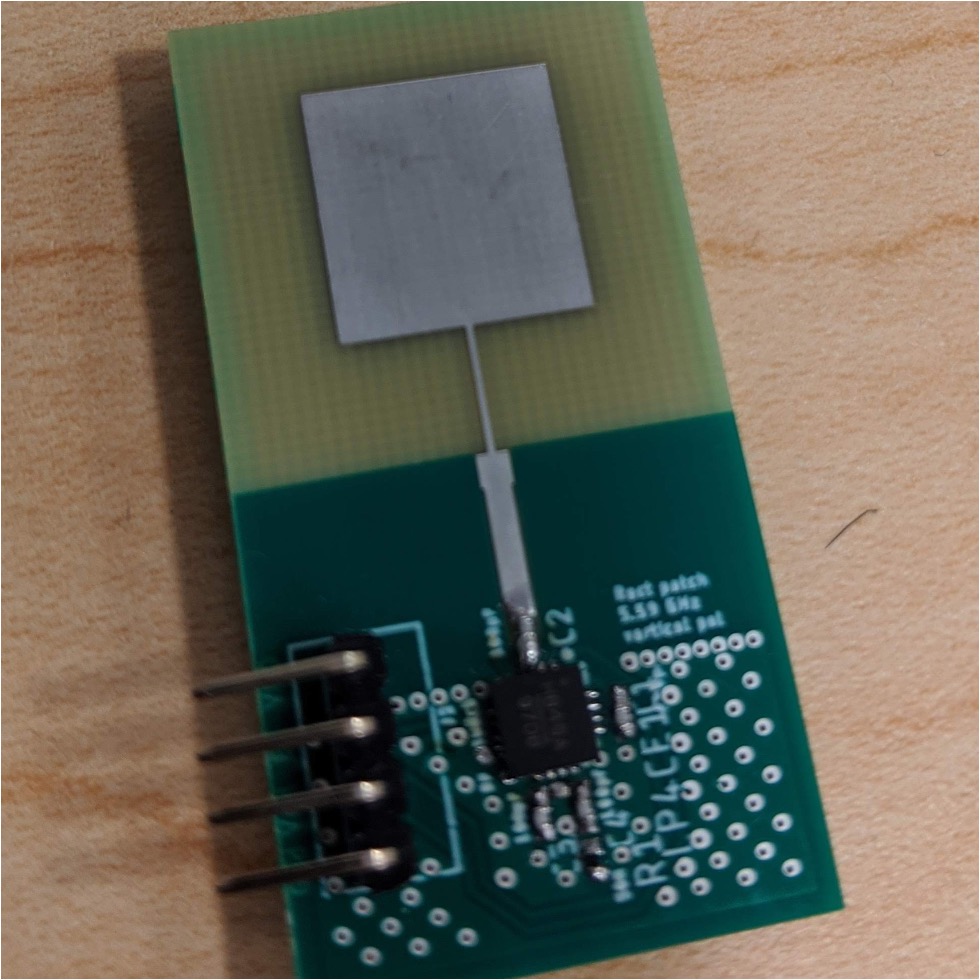

How can we continuously monitor the development of miniature cracks in aging public infrastructure such as bridges? Doing so would inform safety and maintenance decisions for these critical structures well in advance. Past work has looked at coarse-grained structural health monitoring. Platypus builds novel signal processing and low-power hardware tags to mount on bridges. We enable a new operating point that can measure micro-displacements of structures over years and in extreme weather conditions.

Full Paper,

Demo Paper,

Video

Best Demo Runner Up (IPSN 2023)

Millimetro

How can cars perceive critical roadside infrastructure even when weather conditions are unfavorable for visual sensors? Millimetro tackles this by designing low-power radio frequency tags that can be mounted on such infrastructure, along with decoding algorithms that run on the radars in cars to decode, identify, and accurately localize the tags, and thus perceive the critical infrastructure.

Full Paper,

Demo Paper,

Talk

Press: UIUC,

Pioneering Minds

Best Demo Runner Up (MobiCom 2021)

TagFi

How can we locate important and often misplaced objects such as keys, wallets, and tools? Prior work either needs power-hungry tags attached to these objects or custom supporting infrastructure to manage the tags. We instead turn to existing, widely deployed WiFi networks for infrastructure support and build custom, extremely low-power tags that the user can simply forget about for years. Our algorithms locate objects with fine-grained accuracy and provide seamless context awareness with just unmodified WiFi.

Full Paper,

Talk,

Demo Video

Emerging frontiers

Sharp

Recent systems research has built testbeds that share raw spatial sensor data for cooperative perception. While raw data improves accuracy, it raises new privacy concerns that discourage stakeholders from sharing raw sensor data. We alleviate these concerns by proposing methods that address privacy leakage of ego-location and sensor intellectual property.

Full Paper

Quasar

Low Earth Orbit CubeSats and nanosatellites have paved the way for missions with cheaper launch costs. But ground infrastructure for communication remains bulky and expensive. Quasar proposes to alleviate this problem by democratizing access to satellite transmissions at the cost of a few RTL-SDRs. The key idea behind Quasar is to leverage a network of cheap radios to mimic a single expensive radio. Quasar achieves this by tackling three challenges: synchronizing the cheap radios, dealing with high Doppler spread, and centrally aggregating high-bandwidth raw radio streams.
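One textbook way several cheap receivers can act like one better receiver is coherent combining: once the radios are synchronized and their relative phase offsets are known, averaging N aligned copies preserves the signal while independent noise averages down, for an SNR gain of about 10·log10(N) dB. The sketch below assumes the synchronization and Doppler problems are already solved (phase offsets are given) and is not necessarily how Quasar combines its streams; `coherent_combine` and `snr_db` are hypothetical helpers.

```python
import numpy as np

def coherent_combine(streams, phase_offsets_rad):
    """Undo each receiver's (assumed known) phase offset, then average across receivers.

    streams: (n_receivers, n_samples) complex baseband samples
    phase_offsets_rad: (n_receivers,) per-receiver carrier phase offsets
    """
    streams = np.asarray(streams)
    derotate = np.exp(-1j * np.asarray(phase_offsets_rad))[:, None]
    return (streams * derotate).mean(axis=0)

def snr_db(estimate, reference):
    """SNR of `estimate` relative to a known clean `reference` signal."""
    noise = estimate - reference
    return 10 * np.log10(np.mean(np.abs(reference) ** 2) / np.mean(np.abs(noise) ** 2))
```

With 8 receivers at 0 dB SNR each, the combined stream should land near 9 dB, matching 10·log10(8). Any residual phase error between radios erodes this gain, which is why synchronization is the hard part in practice.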

Full Paper,

Video

ACM GetMobile Research Highlight